GPU Driver Internals

This page will detail the internals of various GPU drivers for use with I/O Virtualization.

An absence of critical technical documentation has historically slowed growth and adoption of developer ecosystems for GPU virtualization.

This CC-BY-4.0 licensed content can either be used with attribution, or used as inspiration for new documentation, created by GPU vendors for public commercial distribution as developer documentation.

Where possible, this documentation will clearly label dates and versions of observed-but-not-guaranteed behaviour vs. vendor-documented stable interfaces/behaviour with guarantees of forward or backward compatibility.

Article Structure

This article will aim to provide information about the following details of GPU drivers:

High Level Architecture

This section will detail high level architectures of each driver.

Initialization

This section will cover how the GPU's embedded components, the GPU driver, and virtualization functions are initialized.

Scheduling

This section will detail how the device schedules instructions for execution.

This will attempt to provide a comprehensive view of scheduling from virtualized processes down to execution within the device.

In-VM Scheduling

The In-VM Scheduling section will detail how instructions are scheduled within the virtual machine's GPU device driver.

Between-VM Scheduling

The Between-VM Scheduling section will detail how the host kernel module and/or virtual GPU helper functions handle scheduling / context swaps between virtual machines on a computer system.

Firmware Scheduling (if applicable)

The Firmware Scheduling section will cover the GPU's internal scheduling model if a deferred execution pathway is used (like i915's GuC or OpenRM's GSP).

Where no intermediate scheduling microcontroller is used, this section may be less applicable (e.g. vExeclist scheduling under Intel vGPUs).

Memory Management

The Memory Management section will cover how the GPU driver manages memory for virtual GPUs and host processes.

This will aim to detail the paging abstractions used for global memory translation and per-process memory translation.

Display Surface Virtualization

The Display Surface Virtualization section will detail how virtual displays are provided to guests and/or graphics rendering buffers (pixel surfaces) are shared from guest to host if such functions are provided via the driver.

Graphics Buffer Sharing

This section will cover methods provided by the GPU driver suitable for high performance graphics sharing.

Vendor Neutral

This section will detail functions in common between GPU drivers.

Also see Display Virtualization for Windows OS.

Knowledge Resources Used

This section is supported by significant contributions to documentation and open source by Satyeshwar Singh.

Display Surface Virtualization

The Display Surface Virtualization section will detail how virtual displays are provided to guests and/or graphics rendering buffers (pixel surfaces) are shared from guest to host if such functions are provided via the driver.

Graphics Buffer Sharing

This section will deal with graphics buffer sharing for vendor neutral GPUs.

Display Flow in Linux

Userspace applications provide their buffers to compositor.

Compositor asks Mesa to create a framebuffer.

Framebuffer is allocated through vendor driver.

Vendor driver flips the framebuffer on the screen.

SR-IOV and Mdev

SR-IOV VFs don't have access to the display controller (only PF does).

Known issue is displaying a VF's framebuffer on the screen.

VFs in QEMU Hypervisor (VirtIO-GPU)

SR-IOV and Mdev VFs run in QEMU Hypervisor.

QEMU has full access to all pages in VF's address space.

VirtIO-GPU allows for allocating buffers (not API remoting in this case).

Mesa's KMSRO allocates framebuffer via virtio-gpu.

Mesa's KMSRO asks vendor driver to import framebuffer (no specific compositor dependency).

Transport

A Scatter Gather List (SGL) of physical pages is constructed by VirtIO-GPU of VF.
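To make the idea of a scatter-gather list concrete, the following is a minimal sketch of how a list of page frames backing a buffer could be turned into an SGL. It is an illustration only and not the VirtIO-GPU implementation: the `SgEntry` type, the `build_sgl` helper, the 4 KiB page size, and the merging of adjacent pages are all assumptions made for this example.

```python
from dataclasses import dataclass

PAGE_SIZE = 4096  # assumed page size for this sketch


@dataclass
class SgEntry:
    """One scatter-gather segment: a physically contiguous run of bytes."""
    phys_addr: int
    length: int


def build_sgl(page_frames):
    """Turn an ordered list of page frame numbers into a scatter-gather list.

    Physically adjacent pages are merged into a single entry.
    """
    sgl = []
    for pfn in page_frames:
        addr = pfn * PAGE_SIZE
        if sgl and sgl[-1].phys_addr + sgl[-1].length == addr:
            sgl[-1].length += PAGE_SIZE  # extends the previous contiguous run
        else:
            sgl.append(SgEntry(addr, PAGE_SIZE))
    return sgl


# A framebuffer backed by five pages, three of which happen to be adjacent.
for entry in build_sgl([10, 11, 12, 40, 7]):
    print(hex(entry.phys_addr), entry.length)
```

The order of entries matters: it is the order in which the segments make up the buffer, not the order of their physical addresses.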

QEMU Host-Side u-dma-buf

Host QEMU uses u-dma-buf driver to reconstruct a virtual memory pointer from the SGL shared by VF.

u-dma-buf driver allocates contiguous memory blocks in kernel as DMA buffers.
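The address arithmetic behind "reconstructing a pointer from an SGL" can be sketched as follows. This models only how a linear offset is resolved to a scattered segment; it does not use or imitate the driver interface. The `LinearView` class, the `(offset, length)` tuple format, and the use of a `bytearray` as stand-in guest memory are assumptions for illustration.

```python
from bisect import bisect_right
from itertools import accumulate


class LinearView:
    """Present scattered segments of guest memory as one linear byte range."""

    def __init__(self, guest_memory, sgl):
        # sgl is a list of (guest_physical_offset, length) tuples in buffer order.
        self._mem = memoryview(guest_memory)
        self._sgl = list(sgl)
        lengths = [length for _, length in self._sgl]
        # _starts[i] is the linear offset at which segment i begins.
        self._starts = [0] + list(accumulate(lengths))[:-1]
        self.size = sum(lengths)

    def read_byte(self, linear_offset):
        if not 0 <= linear_offset < self.size:
            raise IndexError("offset outside the shared buffer")
        i = bisect_right(self._starts, linear_offset) - 1
        base, _ = self._sgl[i]
        return self._mem[base + (linear_offset - self._starts[i])]


# Stand-in guest memory with a 4-byte buffer split across two places.
guest_memory = bytearray(64)
guest_memory[40:42] = b"AB"
guest_memory[8:10] = b"CD"

view = LinearView(guest_memory, [(40, 2), (8, 2)])
print(bytes(view.read_byte(i) for i in range(view.size)))  # b'ABCD'
```

No bytes are copied when the view is built; the host side only needs the segment list to address the guest's pages in buffer order.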

QEMU Host-Side Modules

Once virtio-qemu has a DMA buffer, it shares it with the QEMU UI.

QEMU UI supports several toolkits such as GTK and SDL, with GTK being the default.

GTK uses EGL and passes it the DMABUF as a texture.

Windows VFs

Windows OS doesn't support DMABUF capability so we can't allocate buffers from VirtIO-GPU and share them with the Windows Kernel Mode Driver (KMD).

Windows OS is different in the way that the FB is allocated via the OS rather than the miniport driver.

A modified version of the Red Hat virtio-gpu-do (short for Display Only) driver is used.

Windows Driver Stack

OS (DWM) allocates frame buffers and asks GPU to write to them through Miniport.

DVServer UMD (IDD) asks the OS for the FB copy.

DVServer UMD (IDD) passes the virtual memory address to DVServer KMD.

DVServer KMD

DVServer KMD finds the SGL (Scatter Gather List) of physical pages for this virtual memory address.

This SGL is passed via virt-queue from the VF to the host QEMU.

Android VFs

Android uses Linux kernel underneath. VirtIO-GPU is present in Android's kernel.

KMSRO patch to allocate framebuffer via VirtIO-GPU also ported over to Android.

Need modifications in the minigbm lib of Android to connect with KMSRO part.

Rest of stack looks identical to Linux VF/PF.

i915

Knowledge Resources Used

This section is supported by significant contributions to documentation and open source by Zhi Wang, Ben Widawsky, and Igor Bogdanov.

See references 2, 3, 4, 5, 6, 7, 8, and 9 in the References (Talks & Reading Material) section.

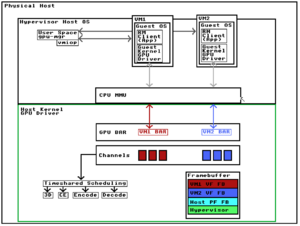

High Level Architecture

i915 Clients

Processes which make use of the Intel i915 driver receive an i915 Client ID.

Initialization

During initialization of the i915 driver, the GuC binary blob is offloaded into the Graphics Translation Table (GTT). This allows the GuC to read the GTT-loaded binary blob from shared framebuffer memory so that it may boot.

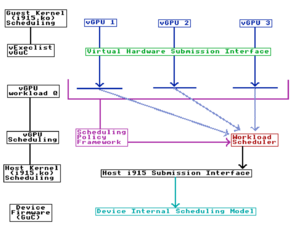

Scheduling

There are several abstractions for GPU virtual machine scheduling. Those are as follows:

- Guest kernel (i915.ko)

- Host kernel (i915.ko)

- Device Firmware (GuC)

In-VM Scheduling

Guest kernel (i915.ko)

vExeclist

The vExeclist is a method to submit commands directly to the GPU without the use of an intermediate microcontroller.

vGuC

vGuC is a command submission interface used to process commands to the Intel Graphics Microcontroller (GuC).

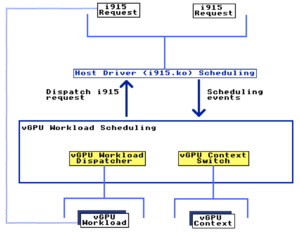

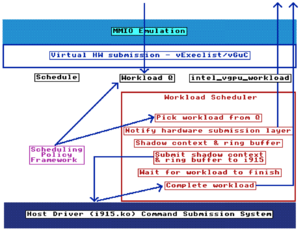

Between-VM Scheduling

Host kernel (i915.ko)

Submitting commands to the GPU under i915 can take several paths. One pathway makes use of direct command submission without an intermediate micro-controller whereas the other uses an intermediate micro-controller. The intermediate micro-controller approach increases the amount of binary-blob code used and abstracts the kernel module from the device's internal scheduling model.

Execlist

Execlist executes commands synchronously on the device without an intermediate microcontroller. Some driver developers prefer this method of executing commands because of its stability and transparency under current i915 development.

GuC

GuC provides an execution pathway with an intermediate microcontroller providing a scheduling abstraction for Intel's preferred internal scheduling model.
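The difference between the two pathways can be modelled conceptually as below. This is a toy model, not i915 code: the `Device`, `DirectSubmission`, and `MicrocontrollerSubmission` classes are hypothetical, and the first-in-first-out policy in `firmware_tick` is an assumption standing in for whatever scheduling the firmware actually applies.

```python
from collections import deque


class Device:
    """Stand-in for the GPU: records commands in the order they execute."""

    def __init__(self):
        self.executed = []

    def execute(self, command):
        self.executed.append(command)


class DirectSubmission:
    """Execlist-like path: the driver hands commands straight to the device."""

    def __init__(self, device):
        self.device = device

    def submit(self, command):
        self.device.execute(command)


class MicrocontrollerSubmission:
    """GuC-like path: the driver queues work and firmware decides when it runs."""

    def __init__(self, device):
        self.device = device
        self.queue = deque()

    def submit(self, command):
        self.queue.append(command)

    def firmware_tick(self):
        # Assumed policy for this sketch: oldest queued command first.
        if self.queue:
            self.device.execute(self.queue.popleft())


direct_device = Device()
DirectSubmission(direct_device).submit("draw")
print(direct_device.executed)  # ['draw']

queued_device = Device()
guc_like = MicrocontrollerSubmission(queued_device)
guc_like.submit("draw")
print(queued_device.executed)  # []
guc_like.firmware_tick()
print(queued_device.executed)  # ['draw']
```

In the second path the driver cannot tell from `submit` alone when a command will run, which is the abstraction from the device's internal scheduling model described above.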

Device Firmware (GuC)

Memory Management

Translation Tables

GTT (Graphics Translation Table)

GPU memory on-device is part of a GTT or Graphics Translation Table. This table stores information globally for all graphics processes within the system. Some processes, such as DRI, access the Global Graphics Translation Table (GGTT), while others receive a Per Process Graphics Translation Table (PPGTT) buffer based on their i915 Client ID.

GGTT (Global Graphics Translation Table)

PPGTT (Per Process Graphics Translation Table)

Process-specific memory buffers are stored inside a Per Process Graphics Translation Table or PPGTT. This is a GPU MMU translated subregion or IOVA of global GPU memory specific to a GPU process's client ID.
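The relationship between one global table and per-client tables can be sketched as follows. This is a simplified single-level model and not the i915 data structures: `TranslationTable`, `ppgtt_for`, the 4 KiB page size, and the page numbers used are assumptions chosen for the example.

```python
PAGE_SIZE = 4096  # assumed page size for this sketch


class TranslationTable:
    """Single-level table mapping virtual page numbers to physical page numbers."""

    def __init__(self):
        self._entries = {}

    def map(self, virt_page, phys_page):
        self._entries[virt_page] = phys_page

    def translate(self, virt_addr):
        page, offset = divmod(virt_addr, PAGE_SIZE)
        if page not in self._entries:
            raise LookupError(f"no mapping for virtual page {page}")
        return self._entries[page] * PAGE_SIZE + offset


ggtt = TranslationTable()  # one table shared by everything that uses it
_ppgtts = {}               # client ID -> that client's private table


def ppgtt_for(client_id):
    """Return the per-process table for a client, creating it on first use."""
    return _ppgtts.setdefault(client_id, TranslationTable())


# Two clients use the same virtual address but reach different physical pages.
ppgtt_for(1).map(0, 100)
ppgtt_for(2).map(0, 200)
print(ppgtt_for(1).translate(0x10))  # 409616
print(ppgtt_for(2).translate(0x10))  # 819216
```

Because each client resolves addresses through its own table, a virtual address that is valid for one client says nothing about what another client can reach.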

Aliasing PPGTT

Aliasing PPGTT (Per Process Graphics Translation Table) refers to partially separated GPU resources (process context).

Real PPGTT

Real PPGTT (Per Process Graphics Translation Table) refers to fully separated GPU resources (process context).

Display Surface Virtualization

The Intel vGPU makes use of two modes for Display Surface Virtualization.

- VirtIO-GPU (Linux)

- Indirect Display Driver (Windows)

VirtIO-GPU

IDD (Indirect Display Driver)

The IDD (Indirect Display Driver) provides virtual display functions with arbitrary resolutions to any rendering device virtual or physical.

Intel's IDD can be found in their Display Virtualization for Windows OS repository.

Graphics Buffer Sharing

Intel's i915 driver provides functionality to directly map display memory from a guest vGPU Virtual Function (either SR-IOV or VFIO-Mdev) into the host GPU's Physical Function without slow memory copies or graphics compression. For users running GPU virtualization on their local device this results in a significant performance uplift compared to traditional graphics sharing functionality built for remote access use-cases such as VDI.

Intel's 0copy display virtualization tools are simple to implement as sharing does not rely upon an added IVSHMEM (Inter-VM Shared Memory device) - rather the host directly maps the guest's display memory via udmabuf as the buffer sharing functions are provided within the i915 open source driver.
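The difference between copying a frame and mapping it can be shown with an analogy in plain Python. This does not touch udmabuf or i915; a `bytearray` stands in for guest display memory, and `memoryview` stands in for a host-side mapping of the same pages.

```python
guest_framebuffer = bytearray(16)  # stands in for guest display memory

copied = bytes(guest_framebuffer)       # copy path: a snapshot of the pixels
mapped = memoryview(guest_framebuffer)  # mapping path: shares the same storage

guest_framebuffer[0] = 0xFF  # the guest renders a new pixel

print(copied[0])  # 0, the copy is stale until another copy is made
print(mapped[0])  # 255, the mapping sees the update with no further work
```

With a copy, every new frame costs another pass over the buffer. With a mapping, the cost is paid once when the mapping is established.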

OpenRM

Knowledge Resources Used

This section is supported by significant contributions to documentation and open source by Andy Currid, Neo Jia, John Fanelli, and Neha Joshi.

High Level Architecture

The Open Resource Manager (RM driver) follows a highly object-oriented paradigm composed of multiple "engines" which act as micro-services for servicing driver requests.

The Open Resource Manager driver (also known as OpenRM) refers to Nvidia's open-kernel-modules.

Broadly speaking the OpenRM driver consists of two parts.

- The Platform RM (OpenRM)

- The Firmware RM (GSP RM / RM Core)

The Platform RM is loaded into the Linux Kernel as nvidia.ko. This module uses Remote Procedure Calls (RPCs) to the GSP RM to communicate with engines from the RM Core.
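The request and reply pattern between the two halves can be sketched as below. This is a conceptual model only: the `PlatformRM` and `FirmwareRM` classes, the JSON encoding, and the `echo` and `sum` engines are all invented for illustration and do not reflect the actual message format or engine names.

```python
import json


class FirmwareRM:
    """Stand-in for the firmware side: dispatches requests to named engines."""

    def __init__(self):
        # Hypothetical engines used only by this sketch.
        self._engines = {
            "echo": lambda params: params,
            "sum": lambda params: sum(params["values"]),
        }

    def handle(self, raw_request):
        request = json.loads(raw_request)
        engine = self._engines.get(request["engine"])
        if engine is None:
            reply = {"id": request["id"], "error": "unknown engine"}
        else:
            reply = {"id": request["id"], "result": engine(request["params"])}
        return json.dumps(reply)


class PlatformRM:
    """Stand-in for the kernel-module side: serialises calls and checks replies."""

    def __init__(self, firmware):
        self._firmware = firmware
        self._next_id = 0

    def call(self, engine, params):
        self._next_id += 1
        raw = json.dumps({"id": self._next_id, "engine": engine, "params": params})
        reply = json.loads(self._firmware.handle(raw))
        if reply["id"] != self._next_id:
            raise RuntimeError("reply does not match request")
        if "error" in reply:
            raise RuntimeError(reply["error"])
        return reply["result"]


rm = PlatformRM(FirmwareRM())
print(rm.call("sum", {"values": [1, 2, 3]}))  # 6
```

The caller never invokes an engine directly; it only names one in a message, which is what allows the engines to live on a different processor.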

RM Clients

Processes (local, remote, or virtualized) which make use of the RM driver receive an RM Client ID.

RM Server

The RM Server (or Resource Server) tracks RM Clients as well as the hardware and software resources they control, allocate, and free.
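The bookkeeping described above can be sketched as a small ownership table. This is a hypothetical model, not the RM Server's real interface: `ResourceServer`, its method names, and the integer handles are assumptions made for the example.

```python
from collections import defaultdict
from itertools import count


class ResourceServer:
    """Tracks which client owns which resource handle."""

    def __init__(self):
        self._next_handle = count(1)
        self._resources = defaultdict(dict)  # client_id -> {handle: description}

    def allocate(self, client_id, description):
        handle = next(self._next_handle)
        self._resources[client_id][handle] = description
        return handle

    def free(self, client_id, handle):
        # A client may only free a resource it owns.
        if handle not in self._resources.get(client_id, {}):
            raise KeyError(f"client {client_id} does not own handle {handle}")
        del self._resources[client_id][handle]

    def free_client(self, client_id):
        """Release everything a client still holds, e.g. when it exits."""
        return self._resources.pop(client_id, {})

    def owned_by(self, client_id):
        return dict(self._resources.get(client_id, {}))


server = ResourceServer()
buffer_handle = server.allocate(client_id=1, description="buffer")
channel_handle = server.allocate(client_id=1, description="channel")
server.free(1, buffer_handle)
print(server.owned_by(1))     # {2: 'channel'}
print(server.free_client(1))  # {2: 'channel'}
print(server.owned_by(1))     # {}
```

Keying every resource by its owning client makes cleanup straightforward when a process or virtual machine goes away without freeing what it allocated.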

RM API

API to control the Resource Manager Server.

RM Core

Core functions of the RM driver controlling resource locking, mapping, unmapping, control calls, constructors, and destructors. Under OpenRM the RM Core runs within the GPU System Processor (GSP micro-controller), while in pre-open source versions of the Resource Manager the RM Core ran within the nvidia.ko kernel module.

Initialization

During hardware bring-up, several binary blobs are loaded from embedded Boot ROM memory to bootstrap the embedded controller, after which additional software is loaded from onboard SPI flash memory.

The software loaded from SPI flash is needed to fully initialize the Falcon/NvRISC processor, and it includes a cached version of the software needed to run the GPU System Processor (GSP).

Once the platform is posted it is ready to communicate with the host platform's RM driver. The OpenRM driver offloads a binary blob containing the RM Core to the GPU System Processor (GSP) which is likely to contain a more recent version than the cached version contained in on-board SPI flash.

Scheduling

There are several abstractions for GPU virtual machine scheduling. Those are as follows:

- Guest kernel (nvidia.ko)

- Host kernel (nvidia.ko)

- Host usermode (nvidia-vgpu-mgr / libnvidia-vgpu.so)

- Device Firmware (GSP)

Command Submission

Runlist

In-VM Scheduling

Virtual machines contain their own GPU scheduling within the Nvidia kernel module in the guest OS.

Guest kernel (nvidia.ko)

Within a virtual machine running the Nvidia driver, messages to the GPU are first sent to the guest nvidia.ko kernel module.

The guest then determines whether a vRPC (virtual Remote Procedure Call) or a pRPC (physical Remote Procedure Call) should be sent. Both pRPCs and vRPCs are sent through the host RM driver (Resource Manager).

Note: Unclear on execution pathway for pRPCs vs vRPCs. pRPCs may go directly to device.

Between-VM Scheduling

In addition to the scheduling which occurs within the virtual machine, the Resource Manager driver also schedules messages to the GPU between GPU-accelerated virtual machines and host processes.

Host kernel (nvidia.ko)

Messages sent by the guest (via vRPC or pRPC) are received by the host nvidia.ko driver.

nvidia.ko contains a virtual GPU state machine which holds status information for the virtual GPU.

nvidia.ko also contains a virtual GPU kernel scheduler which interacts with virtual GPU objects.

nvidia.ko also contains an RM Call scheduler which schedules calls on an RM class.

The nvidia.ko kernel module exits to userspace to execute the nvidia-vgpu-mgr and VMIOP (Virtual Machine Input Output Plugin).

Host usermode (nvidia-vgpu-mgr / libnvidia-vgpu.so)

After exiting to userspace, a daemon process (nvidia-vgpu-mgr, together with its library libnvidia-vgpu.so) is executed to schedule the VM-exits described below.

nvidia-vgpu-mgr

The nvidia-vgpu-mgr is a process which provides the spawning of virtual GPU stubs and population with capability information.

This daemon process interacts with the libnvidia-vgpu.so, nvidia.ko, and nvidia-vgpu-vfio.ko components.

This process and libnvidia-vgpu.so contain and execute the VMIOP.

This process (and the VMIOP) schedules RPCs sent by the guest, receives VFIO BAR-exits, and relays requests to allocate, deallocate, pin, unpin, map, and unmap to the RM Core.

vmiop

VMIOP (Virtual Machine Input Output Plugin) handles presenting virtualized functionality into the guest.

The Virtual Machine Input Output Plugin software handles virtual displays, compute API offload, and most importantly BAR (Base Address Register) quirks.

The VMIOP is an SDK (Software Development Kit) provided in binary format, split between libnvidia-vgpu.so and nvidia-vgpu-mgr, which provides userland helper functions for GPU virtualization.

Messages to the VMIOP are scheduled by Linux kernel niceness (scheduling abstraction).

Device Firmware (GSP)

Messages received by the host RM driver (Resource Manager) are then scheduled by the RM Core contained within the GSP (GPU System Processor). The GSP handles the device's internal scheduling model.

Memory Management

Managing virtual machine memory is very important to the security of virtualization.

This section will cover the method by which secure memory "enclaves" or IO Virtual Addresses (IOVAs) may be provisioned and separation enforced by hardware constructs within the GPU.

Programming the MMU

To enforce separation in hardware between memory allocated to Virtual Machines (VMs), virtualization software must program the GPU's MMU (GMMU controller) to create IO Virtual Addresses (IOVAs).

To create such configurations, several abstractions are used to translate high level representations of virtualization programmed via the VMIOP and nvidia-vgpu-mgr into practical, architecture-specific instructions.

AMAPLibrary

The AMAPLibrary acts as a device abstraction framework for GPU driver software to program using high level representations of MMU configuration.

The AMAPLibrary translates high level representations of GPU virtualization into graphics architecture-specific logic contained within architecture HALs (Hardware Abstraction Layers).

Architecture HALs

GPU Hardware Abstraction Layers (HALs) contain logic specific to graphics architectures, for instance the precise method by which the GPU driver may interact with the Falcon to provision MMU protected memory.
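The layering described above, where one high level request is turned into architecture-specific output by a HAL, can be sketched as follows. Everything here is hypothetical: the `MmuHal` interface, the two example architectures, their page sizes, and their page table entry layouts are invented for illustration and do not describe any real GPU.

```python
from abc import ABC, abstractmethod


class MmuHal(ABC):
    """Interface that each (hypothetical) architecture HAL implements."""

    page_size: int

    @abstractmethod
    def encode_pte(self, phys_page, writable):
        """Return the architecture-specific page table entry for one page."""


class ArchAHal(MmuHal):
    page_size = 4096

    def encode_pte(self, phys_page, writable):
        # Invented layout: bit 0 = valid, bit 1 = writable, address from bit 12.
        return (phys_page << 12) | 0b01 | (0b10 if writable else 0)


class ArchBHal(MmuHal):
    page_size = 65536

    def encode_pte(self, phys_page, writable):
        # Invented layout: bit 15 = valid, bit 14 = writable, address from bit 16.
        return (phys_page << 16) | 0x8000 | (0x4000 if writable else 0)


def map_range(hal, phys_base, length, writable):
    """High level request: map `length` bytes, whatever the architecture."""
    if phys_base % hal.page_size:
        raise ValueError("phys_base must be page aligned")
    first_page = phys_base // hal.page_size
    page_count = -(-length // hal.page_size)  # ceiling division
    return [hal.encode_pte(first_page + i, writable) for i in range(page_count)]


print([hex(pte) for pte in map_range(ArchAHal(), 0x2000, 8192, True)])
print([hex(pte) for pte in map_range(ArchBHal(), 0x10000, 8192, False)])
```

The caller of `map_range` states what it wants mapped and never sees the entry format, so supporting a new architecture means adding a HAL rather than changing the callers.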

DMA from Falcon / NvRISC-V to Frame Buffer Interface (FBIF)

The Falcon (FAst Logic CONtroller) / NvRISC-V embedded controller emits DMAs to the Frame Buffer Interface (FBIF) in order to interact with the device's GMMU controller.

User programs and VMs have their memory translated through the GMMU.

Once created, memory translations within a virtual machine's IO Virtual Address (IOVA) space are protected by the device's GMMU controller.
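The isolation property can be illustrated with a small model in which each virtual machine has its own set of translations and any access outside them faults. This is a sketch under stated assumptions, not the GMMU's real behaviour: the `Gmmu` class, the `GmmuFault` exception, and the rule that rejects overlapping physical ranges between VMs are all hypothetical.

```python
class GmmuFault(Exception):
    """Raised when a VM touches an address it has no translation for."""


class Gmmu:
    def __init__(self):
        self._maps = {}  # vm_id -> list of (iova_base, phys_base, length)

    def map(self, vm_id, iova_base, phys_base, length):
        # Assumed policy: physical memory is never shared between two VMs.
        for other_vm, ranges in self._maps.items():
            if other_vm == vm_id:
                continue
            for _, other_phys, other_len in ranges:
                if phys_base < other_phys + other_len and other_phys < phys_base + length:
                    raise ValueError("physical range already belongs to another VM")
        self._maps.setdefault(vm_id, []).append((iova_base, phys_base, length))

    def translate(self, vm_id, iova):
        for iova_base, phys_base, length in self._maps.get(vm_id, []):
            if iova_base <= iova < iova_base + length:
                return phys_base + (iova - iova_base)
        raise GmmuFault(f"VM {vm_id} has no translation for {hex(iova)}")


gmmu = Gmmu()
gmmu.map(vm_id=1, iova_base=0x0, phys_base=0x1000, length=0x1000)
gmmu.map(vm_id=2, iova_base=0x0, phys_base=0x2000, length=0x1000)

print(hex(gmmu.translate(1, 0x10)))  # 0x1010
print(hex(gmmu.translate(2, 0x10)))  # 0x2010
```

Both VMs use the same IOVA, but neither can form an address that resolves into the other's physical range.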

Translation Tables

vmiop_gva

Display Surface Virtualization

amdgpu

References (Talks & Reading Material)

- Intel Graphics Programmer's Reference Manuals (PRM)

- i915: Hardware Contexts (and some bits about batchbuffers)

- i915: The Global GTT Part 1

- i915: Aliasing PPGTT Part 2

- i915: True PPGTT Part 3

- i915: Future PPGTT Part 4 (Dynamic page table allocations, 64 bit address space, GPU "mirroring", and yeah, something about relocs too)

- i915: Security of the Intel Graphics Stack - Part 1 - Introduction

- i915: Security of the Intel Graphics Stack - Part 2 - FW <-> GuC

- i915: An Introduction to Intel GVT-g (with new architecture)

- lwn.net: Add udmabuf misc device

- Nvidia RISC-V Story

- IOMMU Introduction

- Hardware and Compute Abstraction Layers For Accelerated Computing Using Graphics Hardware and Conventional CPUs

- nVidia GPU Introduction (envytools)

- Delivering High Performance Remote Graphics With Nvidia GRID Virtual GPU

- NVIDIA Multi-Instance GPU

- i915: Kaby Lake Intel Graphics Programmer's Reference Manual [Volume 5: Memory Views]

- Lifecycle of a Triangle - Nvidia's logical pipeline

- Nvidia Parallel Thread Execution (PTX)

- i915/GEM Crashcourse

- drm: Add GEM ("graphics execution manager") to i915 driver.