Virtual I/O Internals

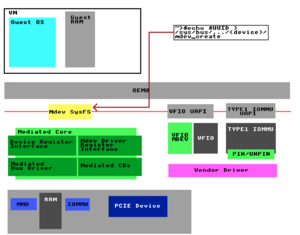

The following document details the internals of a VFIO (Virtual Function I/O) driven Shared I/O Device.

An absence of critical technical documentation has historically slowed growth and adoption of developer ecosystems for GPU virtualization.

This CC-BY-4.0 licensed content can either be used with attribution, or used as inspiration for new documentation, created by GPU vendors for public commercial distribution as developer documentation.

Where possible, this documentation will clearly label dates and versions of observed-but-not-guaranteed behaviour vs. vendor-documented stable interfaces/behaviour with guarantees of forward or backward compatibility.

This article emphasizes the Virtual GPU (vGPU) use case; however, these concepts apply generically to virtualization of I/O devices (TPUs, NICs, storage peripherals, etc.).

| Mdev Mode | SR-IOV Mode | SIOV Mode |
|---|---|---|
| No hardware assistance needed. | Hardware assistance needed. | Hardware assistance needed. |
| Host requires insight about guest workload. | Host ignorance of guest workload. | Host requires insight about guest workload. |
| Error reporting. | No guest driver error reporting. | Error reporting. |
| In depth dynamic monitoring. | Basic dynamic monitoring. | In depth dynamic monitoring. |
| Software defined MMU guest separation. | Firmware defined MMU guest separation. | Firmware defined MMU guest separation. |
| Requires deferred instructions to be supported by host software (support libraries). | Guest is ignorant of host supported software such as support libraries. | |
| Routing interrupts. | Routing interrupts. | Routing interrupts. |
| Device reset. | Device reset. | Device reset. |
| Enable/Disable device. | Enable/Disable device. | Enable/Disable device. |
| Support for multiple scheduling techniques. | Support for multiple scheduling techniques. | Support for multiple scheduling techniques. |
| Single PCI requester ID. | Multiple PCI requester IDs. | Multiple PCI requester IDs. |

All Modes

This section covers concepts which apply to all three modes: Mdev Mode, SR-IOV Mode, and SIOV Mode.

Knowledge Resources Used

This section is supported by significant contributions in open source by Alex Williamson.

See references 2, 14, & 22 in the References (Talks & Reading Material) section.

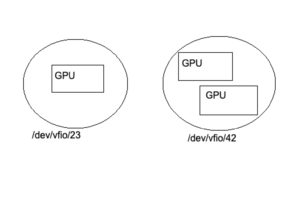

Binding VFIO devices

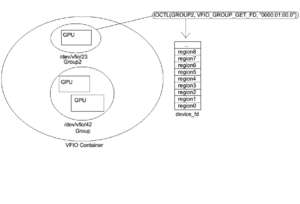

Figure 1: Binding devices to the vfio-pci driver results in VFIO group nodes.

Opening the file "/dev/vfio/vfio" creates a VFIO Container.

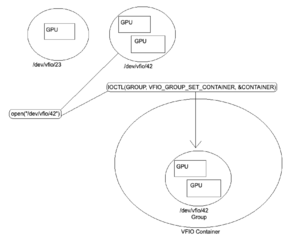

Figure 2: The call IOCTL(GROUP, VFIO_GROUP_SET_CONTAINER, &CONTAINER) places the VFIO group inside the VFIO container.

Programming the IOMMU

Once the group is inside the container, IOCTL(CONTAINER, VFIO_SET_IOMMU, VFIO_TYPE1_IOMMU) sets an IOMMU type for the container, which places it in a state the user can interact with.

Once the IOMMU type has been set, additional VFIO groups may be added to the container without setting the IOMMU type again, because newly added groups automatically inherit the container's IOMMU context.
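The steps described so far (open the container, attach a group, select the IOMMU type) can be sketched as follows. This is a minimal sketch, assuming the asm-generic ioctl encoding used on x86 and arm64, where the C macro `_IO(type, nr)` evaluates to `(type << 8) | nr`; the request numbers are derived from `VFIO_TYPE` (`';'`) and `VFIO_BASE` (100) in vfio.h. The helper name `attach_group` and the group number passed to it are hypothetical.

```python
import fcntl
import os
import struct

VFIO_TYPE = ord(";")  # ioctl "magic" character used by vfio.h
VFIO_BASE = 100       # number of the first VFIO ioctl


def _io(nr):
    """Python equivalent of _IO(VFIO_TYPE, VFIO_BASE + nr) (asm-generic encoding)."""
    return (VFIO_TYPE << 8) | (VFIO_BASE + nr)


VFIO_GET_API_VERSION = _io(0)
VFIO_CHECK_EXTENSION = _io(1)
VFIO_SET_IOMMU = _io(2)
VFIO_GROUP_GET_STATUS = _io(3)
VFIO_GROUP_SET_CONTAINER = _io(4)

VFIO_API_VERSION = 0
VFIO_TYPE1_IOMMU = 1
VFIO_GROUP_FLAGS_VIABLE = 1 << 0


def attach_group(group_number):
    """Create a container, place /dev/vfio/<group_number> in it, enable Type1."""
    container = os.open("/dev/vfio/vfio", os.O_RDWR)
    if fcntl.ioctl(container, VFIO_GET_API_VERSION) != VFIO_API_VERSION:
        raise RuntimeError("unknown VFIO API version")
    if not fcntl.ioctl(container, VFIO_CHECK_EXTENSION, VFIO_TYPE1_IOMMU):
        raise RuntimeError("Type1 IOMMU is not supported")

    group = os.open(f"/dev/vfio/{group_number}", os.O_RDWR)
    # struct vfio_group_status { __u32 argsz; __u32 flags; }
    status = bytearray(struct.pack("=II", 8, 0))
    fcntl.ioctl(group, VFIO_GROUP_GET_STATUS, status)
    _argsz, flags = struct.unpack("=II", status)
    if not flags & VFIO_GROUP_FLAGS_VIABLE:
        raise RuntimeError("group is not viable (not all devices are bound)")

    # The kernel expects a pointer to the container fd, hence the packed int.
    fcntl.ioctl(group, VFIO_GROUP_SET_CONTAINER, struct.pack("=i", container))
    # The IOMMU type is passed by value, not by pointer.
    fcntl.ioctl(container, VFIO_SET_IOMMU, VFIO_TYPE1_IOMMU)
    return container, group
```

Calling `attach_group(26)` would require a device already bound to vfio-pci whose group node is `/dev/vfio/26`, plus sufficient privileges; the group number here is purely illustrative.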

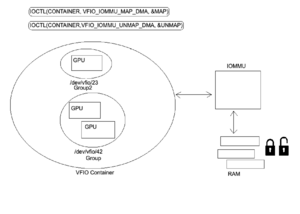

VFIO Memory Mapped IO

Figure 3: Once the VFIO groups have been placed inside the VFIO container and the IOMMU type has been set, the user may map and unmap memory, which automatically inserts Memory Mapped IO (MMIO) entries into the IOMMU and pins/unpins pages as necessary. This can be accomplished using IOCTL(CONTAINER, VFIO_IOMMU_MAP_DMA, &MAP) for map/pin and IOCTL(CONTAINER, VFIO_IOMMU_UNMAP_DMA, &UNMAP) for unmap/unpin.
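The `&MAP` and `&UNMAP` arguments above are small fixed-layout structures. The sketch below packs them in Python, assuming the field order of `struct vfio_iommu_type1_dma_map` and `struct vfio_iommu_type1_dma_unmap` in vfio.h; the helper names `pack_dma_map` and `pack_dma_unmap` are hypothetical.

```python
import struct

VFIO_DMA_MAP_FLAG_READ = 1 << 0   # device may read from the mapping
VFIO_DMA_MAP_FLAG_WRITE = 1 << 1  # device may write to the mapping

# struct vfio_iommu_type1_dma_map { __u32 argsz, flags; __u64 vaddr, iova, size; }
DMA_MAP = struct.Struct("=IIQQQ")
# struct vfio_iommu_type1_dma_unmap { __u32 argsz, flags; __u64 iova, size; }
DMA_UNMAP = struct.Struct("=IIQQ")


def pack_dma_map(vaddr, iova, size,
                 flags=VFIO_DMA_MAP_FLAG_READ | VFIO_DMA_MAP_FLAG_WRITE):
    """Describe a mapping of process memory at vaddr to the device address iova."""
    return DMA_MAP.pack(DMA_MAP.size, flags, vaddr, iova, size)


def pack_dma_unmap(iova, size):
    """Describe the IOVA range to unmap; mutable so the kernel can report back."""
    return bytearray(DMA_UNMAP.pack(DMA_UNMAP.size, 0, iova, size))
```

Under the asm-generic ioctl encoding, the resulting buffers would be passed as the third argument of `fcntl.ioctl(container, request, buffer)` with request `0x3B71` for VFIO_IOMMU_MAP_DMA and `0x3B72` for VFIO_IOMMU_UNMAP_DMA.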

Getting the VFIO Group File Descriptor

Figure 4: Once the device has been bound to a VFIO driver, its group has been placed in a VFIO container, the VFIO container has had its IOMMU type set, and a memory map/page pin of the VFIO device has been completed, a file descriptor can be obtained for the device using IOCTL(GROUP, VFIO_GROUP_GET_DEVICE_FD, NAME). This file descriptor can be used to issue ioctls, to probe for information about the BAR regions, and to configure the IRQs.

VFIO device file descriptor

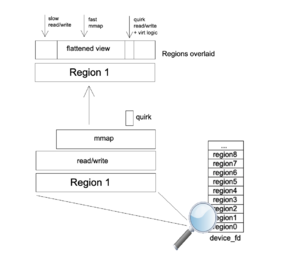

VFIO device file descriptors are divided into regions, and each region maps to a device resource. Region count and info (file offset, allowable access, etc.) can be discovered through ioctl. Each file descriptor region corresponding to a PCI resource is represented as a file offset.

In Mdev Mode this structure is emulated, whereas in SR-IOV Mode the structure is mapped to a real PCI resource.

| 00:00.0 VGA compatible controller |
|---|
| Region 0 Bar0 (Config Space) starts at offset 0 |
| Region 1 Bar1 (MSI - Message Signaled Interrupts) |
| Region 2 Bar2 (MSIX) |
| Region 3 Bar3 |
| Region 4 Bar4 |
| Region 5 Bar5 (IO port space) |
| Expansion ROM |

Below is what the file offsets look like internally: the first region starts at offset 0, and each subsequent region starts where the previous one ends, so the offset grows by the size of every preceding region as you progress through the file.

| <- File Offset -> | | | | |
|---|---|---|---|---|
| 0 -> A | A -> (A+B) | (A+B) -> (A+B+C) | (A+B+C) -> (A+B+C+D) | ... |
| Region 0 (size A) | Region 1 (size B) | Region 2 (size C) | Region 3 (size D) | ... |
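The cumulative layout in the table can be expressed as a running sum. The sketch below follows the model shown in this article; the function name `region_layout` and the sizes used are hypothetical. A real driver reports each region's offset through VFIO_DEVICE_GET_REGION_INFO, so callers should use the reported value rather than computing it.

```python
from itertools import accumulate


def region_layout(sizes):
    """Return (start, end) file offsets for consecutive regions of the given sizes."""
    ends = list(accumulate(sizes))   # A, A+B, A+B+C, ...
    starts = [0] + ends[:-1]         # 0, A, A+B, ...
    return list(zip(starts, ends))
```

For example, three regions of sizes 4096, 256, and 8192 bytes occupy the offset ranges 0 to 4096, 4096 to 4352, and 4352 to 12544.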

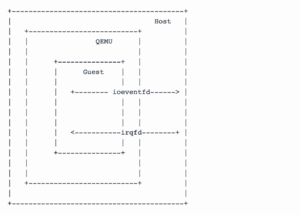

VFIO Interrupts

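A VFIO device delivers Interrupt Requests (IRQs) to its user through an eventfd (Event File Descriptor): the host signals the eventfd when the device raises an interrupt. When the user is a virtual machine, KVM can consume the same eventfd as an irqfd (IRQ File Descriptor) and inject the interrupt into the guest. Signals in the opposite direction, from the guest to the host, travel over an ioeventfd. The resulting event can be handled via a callback.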

IRQs

Device properties are discovered via ioctl.

Get Device Info

| VFIO_DEVICE_GET_INFO | | |
|---|---|---|
| struct vfio_device_info | | |
| | argsz | |
| | flags | |
| | | VFIO_DEVICE_FLAGS_PCI |
| | | VFIO_DEVICE_FLAGS_PLATFORM |
| | | VFIO_DEVICE_FLAGS_RESET |
| | num_irqs | |
| | num_regions | |

The VFIO_DEVICE_GET_INFO ioctl distinguishes PCI devices from platform devices and reports the number of regions and IRQs for a particular device.

The upstream API can be read here (vfio.h).

Sample mdev code to service this request can be found here, and here.
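As a rough illustration of how a caller consumes this structure, the sketch below builds the request buffer and decodes the reply. It assumes the leading fields of `struct vfio_device_info` are laid out as `argsz, flags, num_regions, num_irqs` (four `__u32` values) and uses the flag bit positions from vfio.h; the helper names are hypothetical.

```python
import struct

VFIO_DEVICE_FLAGS_RESET = 1 << 0     # device supports reset
VFIO_DEVICE_FLAGS_PCI = 1 << 1       # vfio-pci device
VFIO_DEVICE_FLAGS_PLATFORM = 1 << 2  # vfio-platform device

# Leading fields of struct vfio_device_info: argsz, flags, num_regions, num_irqs.
DEVICE_INFO = struct.Struct("=IIII")


def new_device_info():
    """Request buffer; argsz tells the kernel how much space the caller offers."""
    return bytearray(DEVICE_INFO.pack(DEVICE_INFO.size, 0, 0, 0))


def decode_device_info(buf):
    """Turn the kernel's reply into a dictionary of named properties."""
    _argsz, flags, num_regions, num_irqs = DEVICE_INFO.unpack_from(buf)
    return {
        "is_pci": bool(flags & VFIO_DEVICE_FLAGS_PCI),
        "is_platform": bool(flags & VFIO_DEVICE_FLAGS_PLATFORM),
        "supports_reset": bool(flags & VFIO_DEVICE_FLAGS_RESET),
        "num_regions": num_regions,
        "num_irqs": num_irqs,
    }
```

With a real device file descriptor, the buffer from `new_device_info()` would be filled in by `fcntl.ioctl(device_fd, 0x3B6B, buf)`, where `0x3B6B` is VFIO_DEVICE_GET_INFO under the asm-generic ioctl encoding.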

Get Region Info

| VFIO_DEVICE_GET_REGION_INFO | | |
|---|---|---|
| struct vfio_region_info | | |
| | argsz | |
| | cap_offset | |
| | flags | |
| | | VFIO_REGION_INFO_FLAG_CAPS |
| | | VFIO_REGION_INFO_FLAG_MMAP |
| | | VFIO_REGION_INFO_FLAG_READ |
| | | VFIO_REGION_INFO_FLAG_WRITE |
| | index | |
| | offset | |
| | size | |

Once the user knows the number of regions within a VFIO device, they can use the VFIO_DEVICE_GET_REGION_INFO ioctl to probe each region for additional information. This ioctl reports whether the region can be read from or written to, whether it supports MMAP, and the offset and size of the region within the VFIO device file descriptor.

The upstream API can be read here (vfio.h).

Sample mdev code to service this request can be found here, and here.
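Probing a region follows the same request/reply pattern, with the region index filled in by the caller. The sketch below assumes the vfio.h layout `argsz, flags, index, cap_offset` (four `__u32` values) followed by `size` and `offset` (two `__u64` values); the helper names are hypothetical.

```python
import struct

VFIO_REGION_INFO_FLAG_READ = 1 << 0
VFIO_REGION_INFO_FLAG_WRITE = 1 << 1
VFIO_REGION_INFO_FLAG_MMAP = 1 << 2
VFIO_REGION_INFO_FLAG_CAPS = 1 << 3

# struct vfio_region_info: argsz, flags, index, cap_offset, size, offset.
REGION_INFO = struct.Struct("=IIIIQQ")


def new_region_info(index):
    """Request buffer asking about the region with the given index."""
    return bytearray(REGION_INFO.pack(REGION_INFO.size, 0, index, 0, 0, 0))


def decode_region_info(buf):
    """Summarize access rights and placement of a region from the kernel's reply."""
    _argsz, flags, index, _cap_offset, size, offset = REGION_INFO.unpack_from(buf)
    return {
        "index": index,
        "readable": bool(flags & VFIO_REGION_INFO_FLAG_READ),
        "writable": bool(flags & VFIO_REGION_INFO_FLAG_WRITE),
        "mmap": bool(flags & VFIO_REGION_INFO_FLAG_MMAP),
        "size": size,
        "offset": offset,
    }
```

A caller would loop over `range(num_regions)`, issue the ioctl once per index, and skip regions that report a size of zero.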

Get IRQ Info

| VFIO_DEVICE_GET_IRQ_INFO | | |
|---|---|---|
| struct vfio_irq_info | | |
| | argsz | |
| | count | |
| | flags | |
| | | VFIO_IRQ_INFO_AUTOMASKED |
| | | VFIO_IRQ_INFO_EVENTFD |
| | | VFIO_IRQ_INFO_MASKABLE |
| | | VFIO_IRQ_INFO_NORESIZE |
| | index | |

VFIO_DEVICE_GET_IRQ_INFO is used to retrieve information about a device IRQ.

VFIO_IRQ_INFO_AUTOMASKED indicates that VFIO automatically masks the interrupt when it fires, which protects the host; the user must unmask it to receive further interrupts.

The upstream API can be read here (vfio.h).

Sample mdev code to service this request can be found here, and here.
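The IRQ flags are a bit field, which maps naturally onto an `enum.IntFlag`. This sketch assumes the vfio.h layout `argsz, flags, index, count` and the flag bit positions defined there; the class name `IrqInfoFlags` and the function name are hypothetical.

```python
import enum
import struct


class IrqInfoFlags(enum.IntFlag):
    EVENTFD = 1 << 0     # can be signaled through an eventfd
    MASKABLE = 1 << 1    # supports the mask and unmask actions
    AUTOMASKED = 1 << 2  # masked by VFIO after firing until the user unmasks it
    NORESIZE = 1 << 3    # the set of enabled interrupts cannot be resized in place


# struct vfio_irq_info: argsz, flags, index, count.
IRQ_INFO = struct.Struct("=IIII")


def decode_irq_info(buf):
    """Return (index, count, flags) from a VFIO_DEVICE_GET_IRQ_INFO reply."""
    _argsz, flags, index, count = IRQ_INFO.unpack_from(buf)
    return index, count, IrqInfoFlags(flags)
```

A membership test such as `IrqInfoFlags.AUTOMASKED in flags` then tells the caller whether an unmask step is needed after each interrupt.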

Set IRQs

| VFIO_DEVICE_SET_IRQS | | |
|---|---|---|
| struct vfio_irq_set | | |
| | argsz | |
| | count | |
| | data[] | |
| | flags | |
| | | VFIO_IRQ_SET_ACTION_MASK |
| | | VFIO_IRQ_SET_ACTION_TRIGGER |
| | | VFIO_IRQ_SET_ACTION_UNMASK |
| | | VFIO_IRQ_SET_DATA_BOOL |
| | | VFIO_IRQ_SET_DATA_EVENTFD |
| | | VFIO_IRQ_SET_DATA_NONE |
| | index | |
| | start | |

VFIO_DEVICE_SET_IRQS is used to set up IRQs. The trigger action configures what happens when the device raises an interrupt (for example, signaling an eventfd), while the mask and unmask actions disable and re-enable delivery. The Bool and None data types are used for loopback testing of the device. Index selects the IRQ type, and start and count select a sub-range of interrupts within that index.

The upstream API can be read here (vfio.h).
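Because `data[]` is a variable-length tail, `argsz` must cover both the fixed header and the payload. The sketch below packs a request that attaches eventfds as the trigger for a range of interrupts, assuming the vfio.h header layout `argsz, flags, index, start, count` and one 32-bit file descriptor per interrupt; the function name `pack_irq_set_eventfds` is hypothetical.

```python
import struct

VFIO_IRQ_SET_DATA_NONE = 1 << 0
VFIO_IRQ_SET_DATA_BOOL = 1 << 1
VFIO_IRQ_SET_DATA_EVENTFD = 1 << 2
VFIO_IRQ_SET_ACTION_MASK = 1 << 3
VFIO_IRQ_SET_ACTION_UNMASK = 1 << 4
VFIO_IRQ_SET_ACTION_TRIGGER = 1 << 5

# Fixed part of struct vfio_irq_set: argsz, flags, index, start, count.
IRQ_SET_HEADER = struct.Struct("=IIIII")


def pack_irq_set_eventfds(index, start, eventfds):
    """Bind one eventfd to each interrupt in [start, start + len(eventfds))."""
    data = struct.pack(f"={len(eventfds)}i", *eventfds)
    flags = VFIO_IRQ_SET_DATA_EVENTFD | VFIO_IRQ_SET_ACTION_TRIGGER
    argsz = IRQ_SET_HEADER.size + len(data)  # header plus variable-length tail
    return IRQ_SET_HEADER.pack(argsz, flags, index, start, len(eventfds)) + data
```

Exactly one DATA flag and one ACTION flag are combined per request, which is why the helper ORs a single pair together.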

Device Decomposure/Recomposure

Figure 5: Virtual Function IO (VFIO) devices are deconstructed in userspace into a set of VFIO primitives (MMIO pages, VFIO/IOMMU Groups, VFIO IRQs, File Descriptors). Recomposure of these devices occurs upon assignment of a Virtual Function (VF) to a QEMU virtual machine.

Memory Management Unit (MMU)

Figure 6: This section will touch upon the mechanisms used for enforcement of Host Physical Address (HPA) to Guest Physical Address (GPA) isolation.

MMIO Isolation (Platform MMU)

The platform's CPU communicates with the GPU by reading/writing to and from pinned MMIO pages in Random Access Memory (RAM). MMIO pages within the RAM are subject to IO Virtual Address (IOVA) translations by the platform's discrete MMU controller which is programmed by the CPU. These IOVA translations serve as a mechanism to enforce HPA to GPA isolation in the context of the platform.

VRAM Isolation (GPU GMMU)

The GPU core performs virtualized operations by reading/writing to and from shadow page tables in onboard Video Random Access Memory (VRAM). Shadow pages within the VRAM are subject to IO Virtual Address (IOVA) translations by the GPU's discrete GPU MMU controller (GMMU) which is programmed by the Embedded CPU (GPU co-processor). These IOVA translations serve as a mechanism to enforce HPA to GPA isolation in the context of the virtual GPUs and multi-process isolation in single user environments.

Platform MMIO <-> GPU Shadow Pages

In the context of VFIO, pinned MMIO pages in RAM act as an interface to communicate with VRAM shadow pages, allowing GPU drivers on the platform to send instructions to the GPU. When the GPU or platform alters memory contained in a shadow page or pinned MMIO page, the change is mirrored in the corresponding IO Virtual Address (IOVA). For example, if shadow page 0 is changed by the GPU, this change is mirrored in MMIO page 0 on the platform (the reverse also applies). When communications occur between the platform and GPU, the information first moves through the MMU/GMMU and is then written to RAM/VRAM.

VFIO Quirks (region traps)

Figure 7: Some regions of the PCI BAR require emulation (slow path) via emulated traps (known as quirks). These regions are primarily in PCI configuration space. QEMU may place an overlay on such a region; reads and writes to the overlay trigger a VM-exit so that the access can be trapped and emulated and the appropriate translations applied (such as those concerned with IO Virtual Addresses, IOVA).

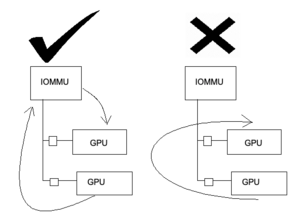

Known IOMMU Issues

DMA Aliasing

- Not all devices generate unique IDs.

- Not all devices generate IDs they should.

DMA Isolation

- Figure 8: Peer-to-Peer DMA Isolation. In many circumstances IO Virtual Address (IOVA) translations do not occur properly in the context of DMA peering. Transactions that occur through the IOMMU are unaffected.

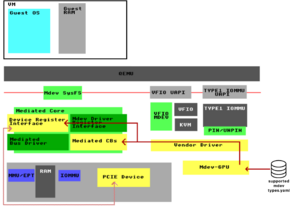

Mdev Mode

Mdev Mode (VFIO Mediated Device) is a method of virtualizing I/O devices enabling full API capabilities without the requirement for hardware assistance.

Knowledge Resources Used

This section is supported by significant contributions in open source by Neo Jia, Kirti Wankhede, Kevin Tian, Yiying Zhang, David Cowperthwaite, Kun Tian, Yaozu Dong, Tina Zhang, Gerd Hoffmann, & Zhi Wang.

See references 1, 7, 8, 17, 18, 19, 70 in the References (Talks & Reading Material) section.

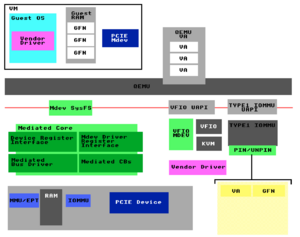

Mediated Core

The mediated device framework made 3 major changes to the VFIO driver.

- 1: Mediated core module (new)
  - Mediated bus driver, create mediated device.
  - Physical device interface for vendor callbacks.
  - Generic Mediated device management user interface (sysfs).
- 2: Mediated device module (new)
  - Manage created mediated device, fully compatible with VFIO user API (UAPI).
- 3: VFIO IOMMU driver (enhancement)
  - VFIO IOMMU API Type1 compatible, easy to extend to non-Type1.

The full list of these changes can be seen in the lists.gnu.org Qemu-devel mailing list archive.

Device Initialization

This section will deal with how an Mdev driver is initialized.

Sample mdev code for device initialization can be found here.

Registering VFIO MDEV as a driver

VFIO Mdev must be registered as a driver. This register event occurs between the Mdev Driver Register Interface and the VFIO MDEV interface.

Registering PCIE Device with the Mediated Core

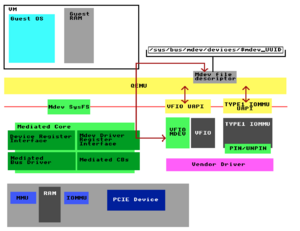

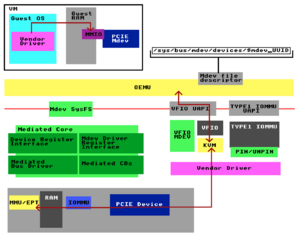

Figure 9: The vendor driver must register with the Mediated Core's Device Register Interface. The example shown uses the GVM Project's Mdev-GPU component to accomplish this step on behalf of the vendor driver.

Registering Mediated Callbacks (CBs)

Figure 9: The vendor driver must now register Mediated Callbacks which it expects to receive from Mdev devices. The example shown uses the GVM Project's Mdev-GPU component to accomplish this step on behalf of the vendor driver.

Creating a Mediated Device via mdev-sysfsdev API

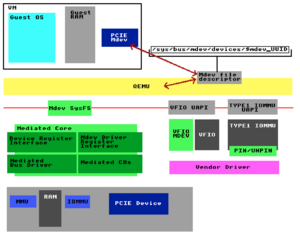

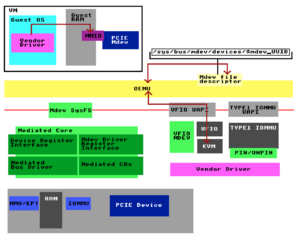

Figure 10: The user of an mdev capable device driver may echo values such as a UUID into the mdev-sysfsdev interface to create a unique mediated device. UUIDs must be unique per mdev device within a host.
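The equivalent of echoing a UUID into the sysfs `create` node can be sketched as below. It assumes the `mdev_supported_types/<type>/create` layout described in the kernel's VFIO Mediated Device documentation; the function name `create_mdev` and its parameters are hypothetical.

```python
import os
import uuid


def create_mdev(parent_sysfs_dir, type_id, dev_uuid=None):
    """Write a UUID to <parent>/mdev_supported_types/<type_id>/create.

    A fresh random UUID is generated when none is supplied, which keeps the
    identifier unique per mdev device on the host.
    """
    dev_uuid = str(dev_uuid or uuid.uuid4())
    create_path = os.path.join(
        parent_sysfs_dir, "mdev_supported_types", type_id, "create"
    )
    with open(create_path, "w") as node:
        node.write(dev_uuid)
    return dev_uuid
```

For the mtty sample driver, the kernel documentation uses the parent directory `/sys/devices/virtual/mtty/mtty` and the type `mtty-2`, so a call would look like `create_mdev("/sys/devices/virtual/mtty/mtty", "mtty-2")` run as root.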

QEMU adds VFIO device to IOMMU container-group

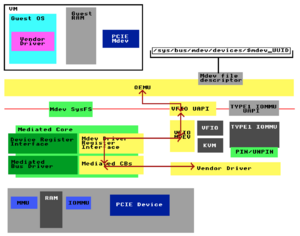

Figure 11: When starting a QEMU process with a VFIO-mdev attached, QEMU calls the VFIO API to add the VFIO device to an IOMMU container/group. QEMU then issues the ioctl to obtain a file descriptor for the device.

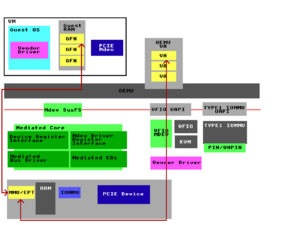

QEMU passes mdev device file descriptor to VM

Figure 12: Once QEMU has obtained the VFIO file descriptor for the Mdev device via IOCTL it is then QEMU's job to present the file descriptor into the virtual machine so that the mdev may be used by the guest.

Instruction Execution

Figure 13: Mdev Mode moves instruction information across a virtual function (VF) device using Remote Procedure Calls, generally by way of ioctl system calls. Signals to and from the guest and the host GPU driver may be passed over file descriptors such as the Interrupt Request File Descriptor (irqfd) and IO Event File Descriptor (ioeventfd). The irqfd may be used to signal from the host into the guest, whereas the ioeventfd may be used to signal from the guest into the host. Guest GPU instructions which would normally serialize as pRPCs (physical Remote Procedure Calls) are instead serialized from the guest as vRPCs (virtual Remote Procedure Calls), which are executed by the host mediated driver.

IRQ remapping

Interrupt Requests (IRQs) must be remapped (trapped for virtualized execution) to protect the host from sensitive instructions which may affect global memory state.

Interrupt Injection

--

--Researching--

--

Memory Management

Mdev memory management is handled by vendor driver software.

Region Passthrough

Guests may be presented with emulated memory regions, passthrough regions, or a mixture of the two, such as when BAR 0 configuration space is emulated while the other regions are passed through.

Emulated Regions

Emulated memory regions use indirect emulated communication requiring a VM-exit (slow). These regions are often used for virtual PCI config space such as in this sample code.

Passthrough Regions

Passthrough memory regions use direct communication requiring no VM-exit (fast).

Region Access

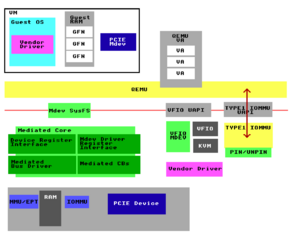

Figure 14: QEMU gets region information via VFIO User API (UAPI) from the vendor driver through VFIO-mdev and Mediated Callbacks.

Figure 15: The guest's vendor driver accesses the Mdev MMIO trapped region backed by a mdev file descriptor (fd) which triggers an Extended Page Table (EPT) violation.

Figure 16: KVM services the EPT violation and forwards it to the QEMU VFIO PCI driver.

Figure 17: QEMU converts the request from KVM into R/W access to the Mdev file descriptor.

EPT Page Violations

Guest Memory Mapped IO (MMIO) trips Extended Page Table (EPT) violations which are trapped by the host MMU. KVM services the EPT violations and forwards them to the QEMU VFIO PCI driver. QEMU then converts each request from KVM into R/W access to the Mdev File Descriptor (FD). Reads and writes are then handled by the host GPU device driver via mediated callbacks (CBs) and VFIO-mdev.
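The final step, turning a trapped guest access into a read or write on the device file descriptor, amounts to a positioned I/O call at the region's file offset plus the register offset. The sketch below shows that arithmetic; the function names and the offsets used in the example are hypothetical, and an ordinary file can stand in for the device when experimenting.

```python
import os


def region_read(device_fd, region_offset, reg, length):
    """Read `length` bytes from register offset `reg` inside a region."""
    return os.pread(device_fd, length, region_offset + reg)


def region_write(device_fd, region_offset, reg, data):
    """Write `data` at register offset `reg` inside a region; returns bytes written."""
    return os.pwrite(device_fd, data, region_offset + reg)
```

With a real mdev, each such call lands in the vendor driver's read or write callback, which is where the emulation of the trapped region takes place.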

Mediated DMA Translations

--

--Researching--

--

Because memory is not statically allocated by the vendor driver under the mediated device framework, there is no requirement to use traditional VFIO pinned pages (via the vfio-pci module); instead, MMIO memory can be mapped incrementally at runtime. As a result, non-standard mediated device vfio stub modules may be used.

Figure 18: Memory regions get added by QEMU.

Figure 19: QEMU calls VFIO_IOMMU_MAP_DMA via the memory listener (not just guest physical memory but also device memory is added through this memory listener).

Figure 20: Type 1 IOMMU tracks <VA, GFN (Guest Frame Number)>. A table lists the QEMU VAs and tracks their mapping to Guest Frame Numbers (GFNs).
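The <VA, GFN> bookkeeping can be pictured as a lookup table keyed by guest frame number. The sketch below is an illustration only, not the kernel's data structure: it assumes 4 KiB pages and uses a plain dictionary, and the class name `GfnTable` and its methods are hypothetical.

```python
PAGE_SHIFT = 12              # assumes 4 KiB pages
PAGE_SIZE = 1 << PAGE_SHIFT


class GfnTable:
    """Tracks which QEMU virtual address backs each guest frame number."""

    def __init__(self):
        self._va_by_gfn = {}

    def map(self, iova, vaddr, size):
        """Record a mapping of `size` bytes from guest address iova to QEMU vaddr."""
        for off in range(0, size, PAGE_SIZE):
            self._va_by_gfn[(iova + off) >> PAGE_SHIFT] = vaddr + off

    def unmap(self, iova, size):
        """Forget every page in the given guest address range."""
        for off in range(0, size, PAGE_SIZE):
            self._va_by_gfn.pop((iova + off) >> PAGE_SHIFT, None)

    def lookup(self, gfn):
        """Return the QEMU VA for a guest frame number, or None if unmapped."""
        return self._va_by_gfn.get(gfn)
```

A vendor driver that needs to pin a guest page would consult a table of this kind to find the backing host memory before pinning it.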

Scheduling

Scheduling is handled by the host mdev driver.

Kernel API

Kernel documentation used for implementing a VFIO Mediated Device may be found at kernel.org.

Sample Code

Sample code for various mdev implementations may be found below:

Mediated Virtual PCI Display Host Device:

Serial PCI Port-based Mediated Device:

Compilation

To compile the kernel modules linked above you should have your distro's equivalent of the build-essential package installed. The included Makefile should provide all that is necessary to successfully compile the kernel modules. You can type the following command to compile the modules from within the directory:

make

When the make operation has been completed successfully the directory will now contain .ko files. These files are the binary kernel modules.

Loading Kernel Modules

Now that you have compiled the kernel modules you may load them via the insmod command.

For example to load the mtty.ko module run the following command from within the directory where you built the modules:

insmod mtty.ko

Unloading Kernel Modules

To unload any of the kernel modules you may make use of the rmmod command.

For example to unload the mtty.ko module run the following command:

rmmod mtty.ko

Additional Documentation

An additional guide explaining how to make use of the mtty.c sample code may be found at line 308 of the kernel.org VFIO Mediated Device documentation.

Mdev Mode Requirements

Driver Support.

Software HPA<->GPA Boundary Enforcement.

SR-IOV Mode

SR-IOV Mode (Single Root I/O Virtualization) involves hardware assisted virtualization on I/O peripherals.

Knowledge Resources Used

This section is supported by significant contributions in open source by Zheng Xiao, Jerry Jiang, & Ken Xue.

See reference 6 in the References (Talks & Reading Material) section.

Instruction Execution

SR-IOV communicates instructions from a virtual function (VF) directly to the PCI BAR.

Memory Management

Guests are presented with passthrough memory regions by the device firmware.

Scheduling

Scheduling may be handled by the host mdev driver and/or the device firmware.

SR-IOV Mode Requirements

Driver Support.

Device SR-IOV support.

Firmware HPA<->GPA Boundary Enforcement.

SIOV Mode

SIOV (Scalable I/O Virtualization) combines concepts from both SR-IOV Mode and Mdev Mode with novel concepts such as shared IOMMU aware buffers and offloading of PCI config space VM-exits (slow path) to a discrete controller.

Revision 1.0 of the SIOV specification can be read on the Open Compute Project website.

Knowledge Resources Used

This section is supported by significant contributions in open source by Kevin Tian, Tina Zhang, Xin Zeng, Yi Liu.

See references 3, 4, 5, 16, 17, 18, 19, 20, 21 in the References (Talks & Reading Material) section.

Memory Management

Enhancements to Intel VT-d introduce a new 'Scalable Mode' that allows the platform to assign more granular IOMMU allocations to mediated devices (mdev memory management). This change is referred to as an IOMMU Aware Mediated Device.

IOMMU Aware Mediated Device

SIOV made several changes to the VFIO driver, Intel IOMMU, and Mediated Device Framework.

The full list of these changes can be seen in the mailing list archive on lwn.net under (vfio/mdev: IOMMU aware mediated device).

SIOV makes use of Shared Hardware Workqueues which may be accessed by processes or Virtual Machines.

According to kernel.org: "In order to allow the hardware to distinguish the context for which work is being executed in the hardware by SWQ interface, SIOV uses Process Address Space ID (PASID), which is a 20-bit number defined by the PCIe SIG. PASID value is encoded in all transactions from the device. This allows the IOMMU to track I/O on a per-PASID granularity in addition to using the PCIe Resource Identifier (RID) which is the Bus/Device/Function."

SIOV Mode Requirements

Driver Support.

Device SIOV support.

Firmware HPA<->GPA Boundary Enforcement.

References (Talks & Reading Material)

- [2016] vGPU on KVM - A VFIO Based Framework by Neo Jia & Kirti Wankhede - slides

- [2016] An Introduction to PCI Device Assignment with VFIO by Alex Williamson - slides

- [2017] Scalable I/O Virtualization by Kevin Tian

- [2019] Bring a Scalable IOV Capable Device into Linux World by Xin Zeng & Yi Liu - slides

- Scalable I/O Virtualization Revision 1.0

- [2018] Live Migration Support for GPU with SRIOV by Zheng Xiao, Jerry Jiang & Ken Xue - slides

- [2017] Intel GVT-g: From Production to Upstream - Zhi Wang, Intel

- [2016] Qemu Graphics Update 2016 by Gerd Hoffmann

- IOCTL

- eventfd - root/virt/kvm/eventfd.c

- (kernel diff:: KVM: irqfd)

- (kernel diff:: KVM: add ioeventfd support)

- KVM irqfd & ioeventfd

- VFIO - Virtual Function I/O - root/virt/kvm/vfio.c

- VFIO Mediated Devices

- IOMMU Aware Mediated Device

- Hardware-Assisted Mediated Pass-Through with VFIO by Kevin Tian

- [2017] Generic Buffer Sharing Mechanism for Mediated Devices by Tina Zhang

- [2019] Toward a Virtualization World Built on Mediated Pass-Through - Kevin Tian

- Auxiliary Bus

- Shared Virtual Addressing

- vfio.blogspot.com

- XenGT by Kevin Tian

- VMware Academic Publications: GPU Virtualization by Micah Dowty & Jeremy Sugerman

- PCI SIG I/O Virtualization

- VMGL by the University of Toronto Computer Science Department

- Kepler VGX Hypervisor

- Intel Virtualization Technology for Directed I/O (VT-d): Enhancing Intel platforms for efficient virtualization of I/O devices

- DMA-Mapping.txt (Dynamic DMA Mapping)

- KVM MMU Virtualization by Xiao Guangrong

- OSDEV: PCI

- Down to the TLP: How PCI express devices talk (Part I)

- Down to the TLP: How PCI express devices talk (Part II)

- Intel ACRN: Enabling SR-IOV Virtualization

- Kernel.org sysfs-bus-pci

- Linux Kernel Labs: Memory Mapping

- Inside Windows Page Frame Number (PFN) - Part 1

- Direct Rendering Infrastructure (DRI)

- DRI User Guide

- Linux Kernel Labs: IO Virtualization

- Gallium3D: Main Page

- Gallium3D Wiki

- Gallium3D talk from XDS 2007

- Memory Management High Level Design (ACRN)

- Block Architecture Diagrams for Geforce series

- Block diagrams for 40 series instruction format

- PCI Configuration Space

- GPU virtual memory in WDDM 2.0

- Intel Graphics Programmer's Reference Manuals (PRM)

- i915: Hardware Contexts (and some bits about batchbuffers)

- i915: The Global GTT Part 1

- i915: Aliasing PPGTT Part 2

- i915: True PPGTT Part 3

- i915: Future PPGTT Part 4 (Dynamic page table allocations, 64 bit address space, GPU "mirroring", and yeah, something about relocs too)

- i915: Security of the Intel Graphics Stack - Part 1 - Introduction

- i915: Security of the Intel Graphics Stack - Part 2 - FW <-> GuC

- A deep dive into QEMU: PCI slave devices

- Debugging QEMU Guests with GDB (start & stop, examine state like registers & memory, set breakpoints & watchpoints)

- IOMMU Introduction

- Arch Wiki: Intel Graphics

- An Introduction to Intel Graphics Virtualization Technology (legacy GVT-g) by Zhi Wang

- Kernel.org: vfio iommu type1: Add support for mediated devices

- Hardware and Compute Abstraction Layers For Accelerated Computing Using Graphics Hardware and Conventional CPUs

- More Shadow Walker: The Progression of TLB-Splitting on x86

- MMU Virtualization Via Intel EPT: Technical Details

- Alex Williamson Github: VFIO Development (tree next)

- GPU PMU: performance monitoring with perf event

- Remote Procedure Call (RPC)

- Virtual Open Systems: API Remoting

- A Full GPU Virtualization Solution with Mediated Pass-Through by Yiying Zhang, David Cowperthwaite, Kun Tian, and Yaozu Dong - [Paper] - [Video] - [Slides 1] - [Slides 2]

- [2014] KvmGT: A Full GPU Virtualization Solution by Jike Song

- docs.kernel.org: DRM Memory Management

- docs.kernel.org: PCI Bus Subsystem

- openglbook.com: What is OpenGL?

- [2020] Getting pixels on screen: introduction to Kernel Mode Setting (KMS) by Simon Ser - slides

- Dynamic Mediation for Live Migration VFIO-PCI

- DRM Management Via CGroups

- DRM: Add GEM ("graphics execution manager") to i915 driver.