Virtual I/O Internals

This document details the internals of a Virtual Function I/O (VFIO) driven Mediated Device (mdev).

| RPC Mode | SR-IOV Mode |
|---|---|
| Host requires insight into the guest workload. | Host is ignorant of the guest workload. |
| Error reporting. | No guest driver error reporting. |
| In-depth dynamic monitoring. | Basic dynamic monitoring. |
| Software-defined MMU guest separation. | Firmware-defined MMU guest separation. |
| Requires deferred instructions to be supported by host software (support libraries). | Guest is ignorant of host-supported software such as support libraries. |
| Routing interrupts. | Routing interrupts. |
| Device reset. | Device reset. |
| Enable/Disable device. | Enable/Disable device. |
| Support for multiple scheduling techniques. | Support for multiple scheduling techniques. |
| Hardware support for multitasking (SR-IOV) not required. | Hardware-level multitasking (SR-IOV) support required. |

Both Modes

This section covers concepts that apply to both RPC Mode and SR-IOV Mode.

Binding VFIO devices

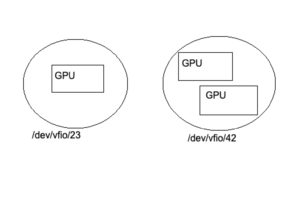

Figure 1: Binding devices to the vfio-pci driver results in VFIO group nodes.
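As a rough sketch of how user space can locate the group node that results from binding, the code below resolves a PCI device's `iommu_group` symlink in sysfs and converts it into a `/dev/vfio/<group>` path. The function names are hypothetical, and the PCI address passed in is whatever device was bound on your system.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <limits.h>
#include <stdio.h>
#include <string.h>
#include <unistd.h>

/* Turn an iommu_group symlink target such as
 * "../../../kernel/iommu_groups/26" into "/dev/vfio/26".
 * Returns 0 on success, -1 if no group number can be extracted. */
int group_node_from_link(const char *target, char *out, size_t out_len)
{
    assert(target != NULL && out != NULL);
    const char *slash = strrchr(target, '/');
    const char *name = slash ? slash + 1 : target;
    if (*name == '\0')
        return -1;
    int n = snprintf(out, out_len, "/dev/vfio/%s", name);
    return (n > 0 && (size_t)n < out_len) ? 0 : -1;
}

/* Resolve the VFIO group node for a PCI address like "0000:06:0d.0". */
int group_node_for_device(const char *bdf, char *out, size_t out_len)
{
    char link[PATH_MAX];
    char target[PATH_MAX];
    int n = snprintf(link, sizeof(link),
                     "/sys/bus/pci/devices/%s/iommu_group", bdf);
    if (n <= 0 || (size_t)n >= sizeof(link))
        return -1;
    ssize_t len = readlink(link, target, sizeof(target) - 1);
    if (len < 0)
        return -1;
    target[len] = '\0';
    return group_node_from_link(target, out, out_len);
}
```

The returned path is the file that is later opened to obtain the group file descriptor.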

Opening the file "/dev/vfio/vfio" creates a VFIO Container.

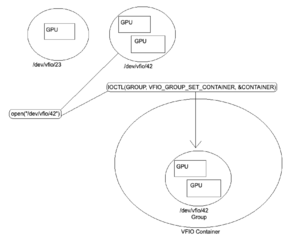

Figure 2: The ioctl call IOCTL(GROUP, VFIO_GROUP_SET_CONTAINER, &CONTAINER) places the VFIO group inside the VFIO container.
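A minimal sketch of the container and group steps, assuming the group node path is already known. The helper name `attach_group` is hypothetical, and the viability check via VFIO_GROUP_GET_STATUS is an extra safety step that the text above does not describe.

```c
#include <assert.h>
#include <fcntl.h>
#include <linux/vfio.h>
#include <sys/ioctl.h>
#include <unistd.h>

/* Open a container, open the group at group_path, and place the group
 * inside the container. Stores both descriptors and returns 0 on success;
 * returns -1 and leaves the outputs untouched on any failure. */
int attach_group(const char *group_path, int *container_out, int *group_out)
{
    assert(group_path && container_out && group_out);

    int container = open("/dev/vfio/vfio", O_RDWR);
    if (container < 0)
        return -1;

    int group = -1;
    struct vfio_group_status status = { .argsz = sizeof(status) };

    if (ioctl(container, VFIO_GET_API_VERSION) == VFIO_API_VERSION &&
        (group = open(group_path, O_RDWR)) >= 0 &&
        ioctl(group, VFIO_GROUP_GET_STATUS, &status) == 0 &&
        (status.flags & VFIO_GROUP_FLAGS_VIABLE) &&
        ioctl(group, VFIO_GROUP_SET_CONTAINER, &container) == 0) {
        *container_out = container;
        *group_out = group;
        return 0;
    }

    if (group >= 0)
        close(group);
    close(container);
    return -1;
}
```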

Programming the IOMMU

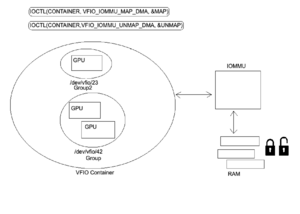

When this has been done, IOCTL(CONTAINER, VFIO_SET_IOMMU, VFIO_TYPE1_IOMMU) can be used to set an IOMMU type for the container, which places it in a state the user can interact with.

Once the IOMMU type has been set and the VFIO container is usable, additional VFIO groups may be added to the container without setting the IOMMU type again, because newly added groups automatically inherit the container's IOMMU context.
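The IOMMU selection step might look like the sketch below. The helper name is hypothetical, and probing with VFIO_CHECK_EXTENSION before setting the type is a precaution added here rather than something the text requires.

```c
#include <assert.h>
#include <linux/vfio.h>
#include <sys/ioctl.h>

/* Select the Type1 IOMMU model for a container that already holds at
 * least one group. Returns 0 on success, -1 on failure. */
int enable_type1_iommu(int container)
{
    /* VFIO_CHECK_EXTENSION returns a positive value when supported. */
    if (ioctl(container, VFIO_CHECK_EXTENSION, VFIO_TYPE1_IOMMU) <= 0)
        return -1;
    return ioctl(container, VFIO_SET_IOMMU, VFIO_TYPE1_IOMMU) < 0 ? -1 : 0;
}
```

Groups added after this call only need VFIO_GROUP_SET_CONTAINER; the IOMMU type is not set a second time.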

VFIO Memory Mapped IO

Figure 3: Once the VFIO groups have been placed inside the VFIO container and the IOMMU type has been set, the user may map and unmap memory, which automatically inserts Memory Mapped IO (MMIO) entries into the IOMMU and pins/unpins pages as necessary. This can be accomplished using IOCTL(CONTAINER, VFIO_IOMMU_MAP_DMA, &MAP) for map/pin and IOCTL(CONTAINER, VFIO_IOMMU_UNMAP_DMA, &UNMAP) for unmap/unpin.
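The map and unmap calls can be sketched as follows. The helper names are hypothetical; the buffer address, IOVA, and size are chosen by the caller and are assumed to be page aligned.

```c
#include <assert.h>
#include <linux/vfio.h>
#include <stdint.h>
#include <sys/ioctl.h>

/* Describe a read/write mapping of `size` bytes at process address
 * `vaddr`, visible to the device at I/O virtual address `iova`. */
struct vfio_iommu_type1_dma_map make_dma_map(void *vaddr, uint64_t iova,
                                             uint64_t size)
{
    assert(size != 0);
    struct vfio_iommu_type1_dma_map map = {
        .argsz = sizeof(map),
        .flags = VFIO_DMA_MAP_FLAG_READ | VFIO_DMA_MAP_FLAG_WRITE,
        .vaddr = (uintptr_t)vaddr,
        .iova = iova,
        .size = size,
    };
    return map;
}

/* Map and pin the pages. Returns 0 on success, -1 on failure. */
int map_dma(int container, void *vaddr, uint64_t iova, uint64_t size)
{
    struct vfio_iommu_type1_dma_map map = make_dma_map(vaddr, iova, size);
    return ioctl(container, VFIO_IOMMU_MAP_DMA, &map) < 0 ? -1 : 0;
}

/* Unmap and unpin a previously mapped IOVA range. */
int unmap_dma(int container, uint64_t iova, uint64_t size)
{
    struct vfio_iommu_type1_dma_unmap unmap = {
        .argsz = sizeof(unmap),
        .iova = iova,
        .size = size,
    };
    return ioctl(container, VFIO_IOMMU_UNMAP_DMA, &unmap) < 0 ? -1 : 0;
}
```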

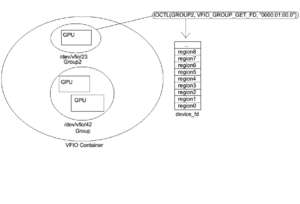

Getting the VFIO Group File Descriptor

Figure 4: Once the device has been bound to a VFIO driver, its group has been set in a VFIO container, the container has had its IOMMU type set, and a memory map/page pin for the VFIO device has been completed, a file descriptor can be obtained for the device. This file descriptor can be used to issue ioctls, to probe for information about the BAR regions, and to configure the IRQs.

VFIO device file descriptor

VFIO device file descriptors are divided into regions, and each region maps to a device resource. The region count and per-region information (file offset, allowable access, etc.) can be discovered through ioctl calls. Each file descriptor region corresponding to a PCI resource is represented as a file offset.

In the case of RPC Mode this structure is emulated whereas in SR-IOV Mode the structure is mapped to a real PCI resource.

| 00:00.0 VGA compatible controller |
|---|
| Region 0 Bar0 (starts at offset 0) |
| Region 1 Bar1 (MSI) |
| Region 2 Bar2 (MSIX) |
| Region 3 Bar3 |
| Region 4 Bar4 |
| Region 5 Bar5 (IO port space) |
| Expansion ROM |

Below is what the file offsets look like internally for each BAR region, starting from offset 0 and growing by the size of each preceding region as you progress through the file.

| <- File Offset -> | | | | |
|---|---|---|---|---|
| 0 -> A | A -> (A+B) | (A+B) -> (A+B+C) | (A+B+C) -> (A+B+C+D) | ... |
| Region 0 (size A) | Region 1 (size B) | Region 2 (size C) | Region 3 (size D) | ... |
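The cumulative layout in the table above can be written as a small prefix sum. This is only a model of the table: the function name is hypothetical, and real code should use the offset reported by VFIO_DEVICE_GET_REGION_INFO for each region rather than computing it.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Given the size of each region, fill offsets[i] with the file offset at
 * which region i starts when regions are packed back to back. */
void region_offsets(const uint64_t *sizes, size_t count, uint64_t *offsets)
{
    assert(sizes != NULL && offsets != NULL);
    uint64_t next = 0;
    for (size_t i = 0; i < count; i++) {
        offsets[i] = next;   /* region i starts where region i-1 ended */
        next += sizes[i];
    }
}
```

With sizes A, B, C, and D this yields the offsets 0, A, A+B, and A+B+C.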

VFIO Interrupts

Interrupts cross the guest/host boundary as VFIO Interrupt Requests (IRQs) carried over file descriptors. An irqfd (IRQ File Descriptor) is used to signal an interrupt into the guest, while the host receives device interrupts through an eventfd (Event File Descriptor). The resulting data can be returned via a callback.
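The eventfd mechanism mentioned above is an ordinary Linux counter object, so its signalling behaviour can be tried without any VFIO device. The sketch below uses hypothetical helper names: one side signals by adding to the counter, and the other side reads and resets it.

```c
#include <assert.h>
#include <stdint.h>
#include <sys/eventfd.h>
#include <unistd.h>

/* Signal the eventfd once by adding 1 to its counter. */
int signal_event(int efd)
{
    uint64_t one = 1;
    return write(efd, &one, sizeof(one)) == (ssize_t)sizeof(one) ? 0 : -1;
}

/* Read how many signals are pending; the read resets the counter to 0.
 * Blocks if the counter is 0 and the descriptor is in blocking mode. */
int consume_events(int efd, uint64_t *count)
{
    assert(count != NULL);
    return read(efd, count, sizeof(*count)) == (ssize_t)sizeof(*count) ? 0 : -1;
}
```

A descriptor created with `eventfd(0, 0)` can be handed to VFIO as the interrupt target and then polled or read by the receiving side.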

IRQs

Device properties are discovered via ioctl.

Get Device Info

| VFIO_DEVICE_GET_INFO | | |
|---|---|---|
| struct vfio_device_info | | |
| | argsz | |
| | flags | |
| | | VFIO_DEVICE_FLAGS_PCI |
| | | VFIO_DEVICE_FLAGS_PLATFORM |
| | | VFIO_DEVICE_FLAGS_RESET |
| | num_irqs | |
| | num_regions | |

The VFIO_DEVICE_GET_INFO ioctl provides the information needed to distinguish between PCI and platform devices, as well as the number of regions and IRQs for a particular device.
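A minimal sketch of the device query, with hypothetical helper names. `device_kind` only inspects the two bus flags listed in the table above and reports anything else as "other".

```c
#include <assert.h>
#include <linux/vfio.h>
#include <string.h>
#include <sys/ioctl.h>

/* Fill `info` for an open VFIO device. Returns 0 on success, -1 on error. */
int get_device_info(int device, struct vfio_device_info *info)
{
    assert(info != NULL);
    memset(info, 0, sizeof(*info));
    info->argsz = sizeof(*info);   /* tells the kernel how much it may write */
    return ioctl(device, VFIO_DEVICE_GET_INFO, info) < 0 ? -1 : 0;
}

/* Classify the device from its flags. */
const char *device_kind(const struct vfio_device_info *info)
{
    assert(info != NULL);
    if (info->flags & VFIO_DEVICE_FLAGS_PCI)
        return "pci";
    if (info->flags & VFIO_DEVICE_FLAGS_PLATFORM)
        return "platform";
    return "other";
}
```

After a successful call, `info->num_regions` and `info->num_irqs` give the index ranges to probe with the region and IRQ queries.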

Get Region Info

| VFIO_DEVICE_GET_REGION_INFO | | |
|---|---|---|
| struct vfio_region_info | | |
| | argsz | |
| | cap_offset | |
| | flags | |
| | | VFIO_REGION_INFO_FLAG_CAPS |
| | | VFIO_REGION_INFO_FLAG_MMAP |
| | | VFIO_REGION_INFO_FLAG_READ |
| | | VFIO_REGION_INFO_FLAG_WRITE |
| | index | |
| | offset | |
| | size | |

Once the user knows the number of regions within a VFIO device, they can use the VFIO_DEVICE_GET_REGION_INFO ioctl to probe each region for additional information. The call reports whether the region can be read from or written to, whether it supports mmap, and the offset and size of the region within the VFIO device file descriptor.
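Probing a region might look like the sketch below. The helper names are hypothetical, and the "rwm" string is just a compact way to display the three access flags.

```c
#include <assert.h>
#include <linux/vfio.h>
#include <stdint.h>
#include <string.h>
#include <sys/ioctl.h>

/* Query region `index` of an open VFIO device. Returns 0 or -1. */
int get_region_info(int device, uint32_t index, struct vfio_region_info *reg)
{
    assert(reg != NULL);
    memset(reg, 0, sizeof(*reg));
    reg->argsz = sizeof(*reg);
    reg->index = index;
    return ioctl(device, VFIO_DEVICE_GET_REGION_INFO, reg) < 0 ? -1 : 0;
}

/* Render the access flags as three characters plus a terminator:
 * 'r' readable, 'w' writable, 'm' mmap-capable, '-' when absent. */
void region_access_string(const struct vfio_region_info *reg, char buf[4])
{
    assert(reg != NULL && buf != NULL);
    buf[0] = (reg->flags & VFIO_REGION_INFO_FLAG_READ) ? 'r' : '-';
    buf[1] = (reg->flags & VFIO_REGION_INFO_FLAG_WRITE) ? 'w' : '-';
    buf[2] = (reg->flags & VFIO_REGION_INFO_FLAG_MMAP) ? 'm' : '-';
    buf[3] = '\0';
}
```

On success, `reg->offset` and `reg->size` describe where the region lives inside the device file descriptor.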

Get IRQ Info

| VFIO_DEVICE_GET_IRQ_INFO | | |
|---|---|---|
| struct vfio_irq_info | | |
| | argsz | |
| | count | |
| | flags | |
| | | VFIO_IRQ_INFO_AUTOMASKED |
| | | VFIO_IRQ_INFO_EVENTFD |
| | | VFIO_IRQ_INFO_MASKABLE |
| | | VFIO_IRQ_INFO_NORESIZE |
| | index | |

VFIO_IRQ_INFO_AUTOMASKED indicates that VFIO automatically masks the interrupt when it occurs, which protects the host; the user must unmask the interrupt to receive it again.
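A sketch of querying an IRQ index and re-arming an automasked interrupt. The helper names are hypothetical; the unmask call uses VFIO_DEVICE_SET_IRQS with no data payload.

```c
#include <assert.h>
#include <linux/vfio.h>
#include <stdint.h>
#include <string.h>
#include <sys/ioctl.h>

/* Query IRQ `index` of an open VFIO device. Returns 0 or -1. */
int get_irq_info(int device, uint32_t index, struct vfio_irq_info *irq)
{
    assert(irq != NULL);
    memset(irq, 0, sizeof(*irq));
    irq->argsz = sizeof(*irq);
    irq->index = index;
    return ioctl(device, VFIO_DEVICE_GET_IRQ_INFO, irq) < 0 ? -1 : 0;
}

/* Nonzero when the interrupt is masked automatically after it fires and
 * therefore has to be unmasked by the user after each delivery. */
int irq_needs_unmask(const struct vfio_irq_info *irq)
{
    assert(irq != NULL);
    return (irq->flags & VFIO_IRQ_INFO_AUTOMASKED) != 0;
}

/* Unmask the first interrupt of the given index. Returns 0 or -1. */
int unmask_irq(int device, uint32_t index)
{
    struct vfio_irq_set set = {
        .argsz = sizeof(set),
        .flags = VFIO_IRQ_SET_DATA_NONE | VFIO_IRQ_SET_ACTION_UNMASK,
        .index = index,
        .start = 0,
        .count = 1,
    };
    return ioctl(device, VFIO_DEVICE_SET_IRQS, &set) < 0 ? -1 : 0;
}
```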

Set IRQs

| VFIO_DEVICE_SET_IRQS | | |
|---|---|---|
| struct vfio_irq_set | | |
| | argsz | |
| | count | |
| | data[] | |
| | flags | |
| | | VFIO_IRQ_SET_ACTION_MASK |
| | | VFIO_IRQ_SET_ACTION_TRIGGER |
| | | VFIO_IRQ_SET_ACTION_UNMASK |
| | | VFIO_IRQ_SET_DATA_BOOL |
| | | VFIO_IRQ_SET_DATA_EVENTFD |
| | | VFIO_IRQ_SET_DATA_NONE |
| | index | |
| | start | |
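Because `data[]` is a variable-length tail, the structure has to be allocated with room for the payload. The sketch below, using hypothetical helper names, builds a request that routes one interrupt to an eventfd by combining VFIO_IRQ_SET_DATA_EVENTFD with VFIO_IRQ_SET_ACTION_TRIGGER.

```c
#include <assert.h>
#include <linux/vfio.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>
#include <sys/ioctl.h>

/* Allocate a vfio_irq_set that asks VFIO to signal `efd` whenever the
 * first interrupt of `index` fires. The caller frees the result. */
struct vfio_irq_set *make_trigger_eventfd(uint32_t index, int32_t efd)
{
    size_t len = sizeof(struct vfio_irq_set) + sizeof(efd);
    struct vfio_irq_set *set = calloc(1, len);
    if (set == NULL)
        return NULL;
    set->argsz = (uint32_t)len;   /* header plus one 32-bit descriptor */
    set->flags = VFIO_IRQ_SET_DATA_EVENTFD | VFIO_IRQ_SET_ACTION_TRIGGER;
    set->index = index;
    set->start = 0;
    set->count = 1;
    memcpy(set->data, &efd, sizeof(efd));
    return set;
}

/* Build the request and submit it. Returns 0 on success, -1 on failure. */
int route_irq_to_eventfd(int device, uint32_t index, int32_t efd)
{
    struct vfio_irq_set *set = make_trigger_eventfd(index, efd);
    if (set == NULL)
        return -1;
    int rc = ioctl(device, VFIO_DEVICE_SET_IRQS, set);
    free(set);
    return rc < 0 ? -1 : 0;
}
```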

Device Decomposure/Recomposure

Figure 5: Virtual Function IO (VFIO) devices are deconstructed in userspace into a set of VFIO primitives (MMIO pages, VFIO/IOMMU Groups, VFIO IRQs, File Descriptors). Recomposure of these devices occurs upon assignment of a Virtual Function (VF) to a QEMU virtual machine.

Memory Management Unit (MMU)

Figure 6: This section will touch upon the mechanisms used for enforcement of Host Physical Address (HPA) to Guest Physical Address (GPA) isolation.

MMIO Isolation (Platform MMU)

The platform's CPU communicates with the GPU by reading/writing to and from pinned MMIO pages in Random Access Memory (RAM). MMIO pages within the RAM are subject to IO Virtual Address (IOVA) translations by the platform's discrete MMU controller which is programmed by the CPU. These IOVA translations serve as a mechanism to enforce HPA to GPA isolation in the context of the platform.

VRAM Isolation (GPU GMMU)

The GPU core performs virtualized operations by reading/writing to and from shadow page tables in onboard Video Random Access Memory (VRAM). Shadow pages within the VRAM are subject to IO Virtual Address (IOVA) translations by the GPU's discrete GPU MMU controller (GMMU) which is programmed by the Embedded CPU (GPU co-processor). These IOVA translations serve as a mechanism to enforce HPA to GPA isolation in the context of the virtual GPUs.

Platform MMIO <-> GPU Shadow Pages

In the context of VFIO, pinned MMIO pages in RAM act as an interface to communicate with VRAM shadow pages, allowing GPU drivers on the platform to send instructions to the GPU. When the GPU or platform alters memory contained in a shadow page or pinned MMIO page, the change is mirrored in the corresponding IO Virtual Address (IOVA). For example, if shadow page 0 is changed by the GPU, this change is mirrored in MMIO page 0 on the platform (the reverse also applies). When communications occur between the platform and GPU, the information first moves through the MMU/GMMU and is then written to RAM/VRAM.

VFIO Quirks (region traps)

Known IOMMU Issues

DMA Aliasing

- Not all devices generate unique IDs.

- Not all devices generate IDs they should.

DMA Isolation

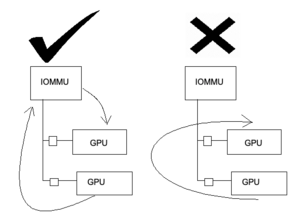

- Figure 7: Peer-to-Peer DMA Isolation. In many circumstances IO Virtual Address (IOVA) translations do not occur properly in the context of DMA peering. Transactions that occur through the IOMMU are unaffected.

RPC Mode

Instruction Execution

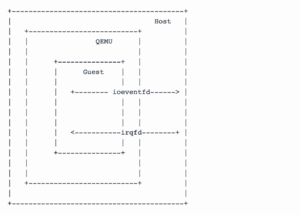

Figure 8: RPC Mode moves instruction information across a virtual function (VF) device using Remote Procedure Calls generally by way of software interrupt (IOCTL). Signals to and from the guest and the host GPU driver may be passed over file descriptors such as the Interrupt Request File Descriptor (irqfd) and IO Event File Descriptor (ioeventfd). The irqfd may be used to signal from the host into the guest whereas the ioeventfd may be used to signal from the guest into the host. Guest GPU instructions passed from the guest as Remote Procedure Calls are Just-in-time recompiled on the host for execution by a device driver.
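On a KVM host, the two signalling directions described above are registered with the KVM_IRQFD and KVM_IOEVENTFD ioctls on the VM file descriptor. The sketch below uses hypothetical helper names; the GSI number, guest address, and access length are values a hypervisor would choose and are assumptions here.

```c
#include <assert.h>
#include <linux/kvm.h>
#include <stdint.h>
#include <sys/ioctl.h>

/* Host -> guest: signalling `efd` injects interrupt `gsi` into the guest. */
struct kvm_irqfd make_irqfd(int efd, uint32_t gsi)
{
    assert(efd >= 0);
    struct kvm_irqfd irqfd = { .fd = (uint32_t)efd, .gsi = gsi };
    return irqfd;
}

/* Guest -> host: a guest write of `len` bytes to `guest_addr` signals `efd`
 * instead of being emulated synchronously. */
struct kvm_ioeventfd make_ioeventfd(int efd, uint64_t guest_addr, uint32_t len)
{
    assert(efd >= 0);
    struct kvm_ioeventfd io = { .addr = guest_addr, .len = len, .fd = efd };
    return io;
}

/* Register the irqfd with a KVM VM. Returns 0 on success, -1 on failure. */
int register_irqfd(int vm_fd, int efd, uint32_t gsi)
{
    struct kvm_irqfd irqfd = make_irqfd(efd, gsi);
    return ioctl(vm_fd, KVM_IRQFD, &irqfd) < 0 ? -1 : 0;
}

/* Register the ioeventfd with a KVM VM. Returns 0 on success, -1 on failure. */
int register_ioeventfd(int vm_fd, int efd, uint64_t guest_addr, uint32_t len)
{
    struct kvm_ioeventfd io = make_ioeventfd(efd, guest_addr, len);
    return ioctl(vm_fd, KVM_IOEVENTFD, &io) < 0 ? -1 : 0;
}
```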

IRQ remapping

Interrupt Requests (IRQs) must be remapped (trapped for virtualized execution) to protect the host from sensitive instructions which may affect global memory state.

Memory Management

Region Passthrough

Guests may be presented with emulated memory regions which use indirect emulated communication requiring a VM-exit (slow) or instead the guest may be presented with passthrough memory regions which use direct communication requiring no VM-exit (fast).

EPT Page Violations

Guest Memory Mapped IO (MMIO) accesses trip Extended Page Table (EPT) violations, which are trapped by the host MMU. KVM services the EPT violations and forwards them to the QEMU VFIO PCI driver. QEMU then converts the request from KVM into read/write accesses to the mdev File Descriptor (FD). Reads and writes are then handled by the host GPU device driver via mediated callbacks (CBs) and VFIO-mdev.
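The final step of that path, turning a trapped register access into a read or write on the device file descriptor, can be sketched as below. The helper names are hypothetical, `region_offset` is assumed to be the offset reported for the region by VFIO_DEVICE_GET_REGION_INFO, and this is not QEMU's actual code.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <stdint.h>
#include <stdio.h>
#include <sys/types.h>
#include <unistd.h>

/* Read a 32-bit register at byte offset `reg` inside a region that starts
 * at `region_offset` within the device file descriptor. */
int region_read32(int device, uint64_t region_offset, uint64_t reg,
                  uint32_t *val)
{
    assert(val != NULL);
    ssize_t n = pread(device, val, sizeof(*val), (off_t)(region_offset + reg));
    if (n != (ssize_t)sizeof(*val)) {
        perror("pread");
        return -1;
    }
    return 0;
}

/* Write a 32-bit register in the same way. */
int region_write32(int device, uint64_t region_offset, uint64_t reg,
                   uint32_t val)
{
    ssize_t n = pwrite(device, &val, sizeof(val), (off_t)(region_offset + reg));
    if (n != (ssize_t)sizeof(val)) {
        perror("pwrite");
        return -1;
    }
    return 0;
}
```

For a mediated device, each such access ends up in the read or write callback of the host driver that registered the mdev.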

Scheduling

Scheduling is handled by the host mdev driver.

RPC Mode Requirements:

Sensitive Instruction List.

Instruction Shim/Binary Translator.

HPA<->GPA Boundary Enforcement.

Kernel API

Kernel documentation used for implementing a VFIO Mediated Device may be found at kernel.org.

Sample Code

Sample code for implementation of a serial PCI port-based mediated device may be found here: mtty.c. A guide explaining how to make use of the mtty.c sample code may be found at line 308 of the kernel.org VFIO Mediated Device documentation.

SR-IOV Mode

Instruction Execution

In SR-IOV Mode, instructions are communicated from a virtual function (VF) directly to the PCI BAR.

Memory Management

Guests are presented with passthrough memory regions by the device firmware.

Scheduling

Scheduling may be handled by the host mdev driver and/or the device firmware.

SR-IOV Mode Requirements:

Device SR-IOV support.

HPA<->GPA Boundary Enforcement.

Talks & Reading Material

eventfd - root/virt/kvm/eventfd.c

(kernel diff: KVM: irqfd)

(kernel diff: KVM: add ioeventfd support)

VFIO - Virtual Function I/O - root/virt/kvm/vfio.c

[2016] An Introduction to PCI Device Assignment with VFIO by Alex Williamson - slides

Intel GVT-g: From Production to Upstream - Zhi Wang, Intel

Hardware-Assisted Mediated Pass-Through with VFIO by Kevin Tian

[2016] vGPU on KVM - A VFIO Based Framework by Neo Jia & Kirti Wankhede

[2017] Generic Buffer Sharing Mechanism for Mediated Devices by Tina Zhang

[2019] Toward a Virtualization World Built on Mediated Pass-Through - Kevin Tian